Graphics HW and SW Stack¶

This section provides a more detailed illustration of animation across the software and hardware stacks on both the CPU and the GPU, and explains how data flows between the CPU, the GPU, and each layer of the software stack.

The previous section, 3D Modeling, described what information 3D models store and how this information is used to perform animation.

The upcoming section SW Stack and Data Flow describes how each frame is generated to display movement animation or skinning effects, using the small set of animation parameters stored in the 3D model and sent from the CPU.

The upcoming section The Role and Purpose of Shaders explains how different visual effects can be achieved by switching shaders to apply different materials across frames.


HW Block Diagram¶

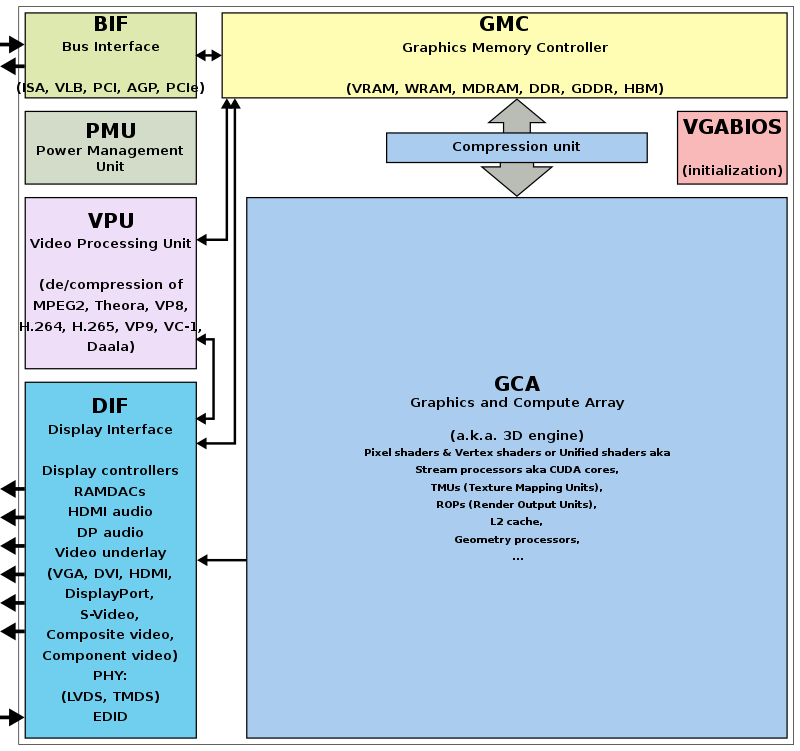

The block diagram of the Graphics Processing Unit (GPU) is shown in Fig. 5.

Fig. 5 Components of a GPU: GPU has accelerated video decoding and encoding [1]¶

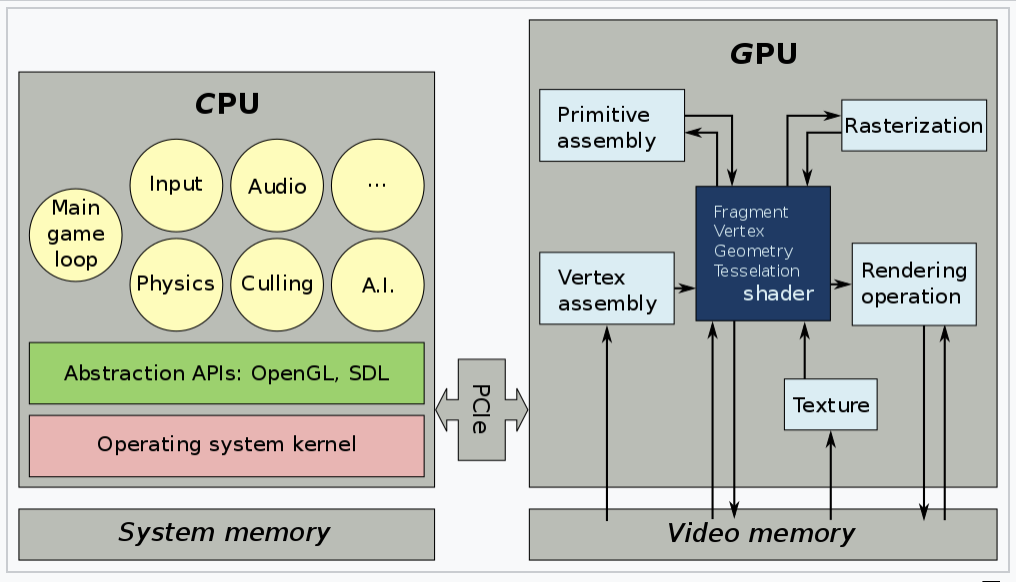

The roles of the CPU and GPU in graphics animation are illustrated in Fig. 6.

Fig. 6 OpenGL and Vulkan are both rendering APIs. In both cases, the GPU executes shaders, while the CPU executes everything else [2].¶

The GPU cannot directly read user input from, say, a keyboard, mouse, or gamepad, nor can it play audio or load files from a hard drive. For this reason, the animation work cannot be left entirely to the GPU [3].

A graphics driver consists of an implementation of the OpenGL state machine and a compilation stack that compiles shaders into the GPU’s machine language. This compilation, like almost everything else, runs on the CPU; the compiled shaders are then sent to the GPU and executed by it. (SDL = Simple DirectMedia Layer) [4].

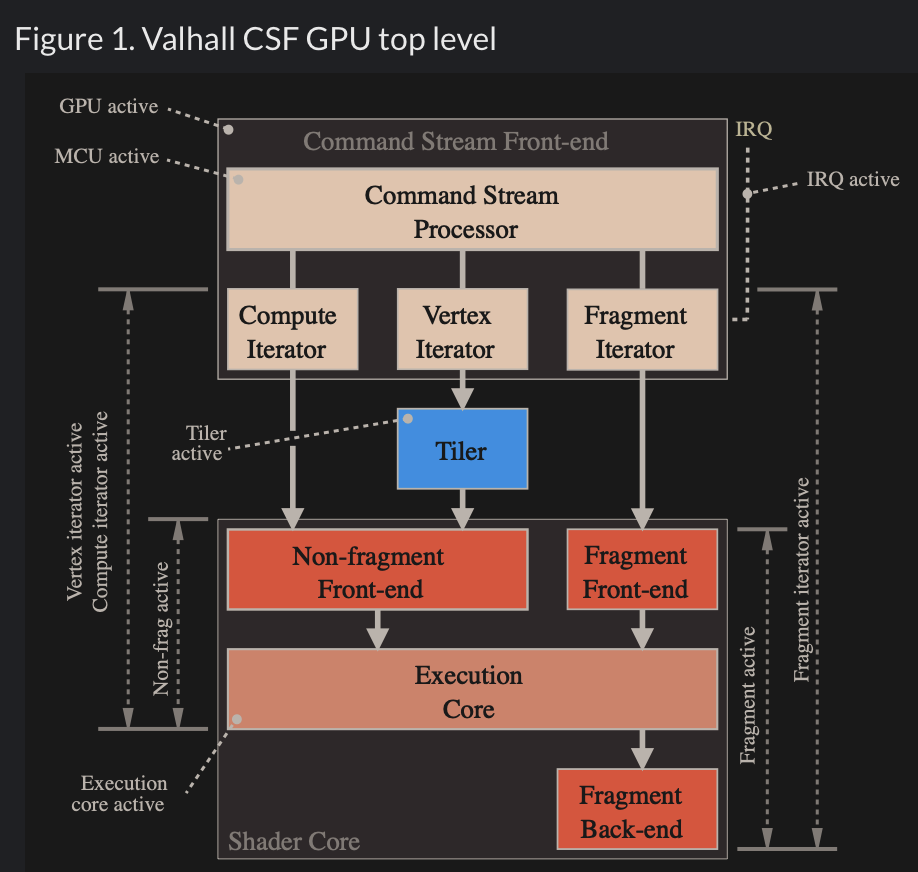

Fig. 7 MCU and specific HW circuits to speed up the processing of CSF (Command Stream Frontend) [5].¶

The GPU driver writes commands and data from the CPU to the GPU’s memory through PCIe. In GPU memory, these commands form the command stream that the Command Stream Frontend (CSF) processes. A GPU chipset includes tens of SIMD processors (cores). To speed up the GPU driver’s processing, the command stream is kept in a simpler form. As a result, the GPU chipset includes an MCU (Microcontroller Unit) and specific hardware that translate the command stream into individual data structures for each SIMD processor to execute, as shown in Fig. 7. The MCU firmware is usually updated by the MCU itself.

SW Stack and Data Flow¶

The driver runs on the CPU side as shown in Fig. 8. The OpenGL API eventually calls the driver’s functions, and the driver executes these functions by issuing commands to the GPU hardware and/or sending data to the GPU.

Even so, the GPU’s rendering work, which uses data such as 3D vertices and colors sent from the CPU and stored in GPU or shared memory, consumes more computing power than the work done on the CPU.

![digraph G {

rankdir=LR;

compound=true;

node [shape=record];

subgraph cluster_cpu {

label = "CPU (Client)";

CPU_SW [label=" 3D Model | Game Engine | { OpenGL API | Shaders \n (builtin-functions)} | <f1> Driver"];

}

subgraph cluster_gpu {

label = "GPU HW (Server)";

subgraph cluster_gpu_sw {

label = "3D Rendering pipeline \ndescribed in the later section";

ModelData [label="3D Model Information\n(VAO, VBO, textures,\nindex buffers, materials)"];

}

}

CPU_SW:f1 -> ModelData [label="1. Creating Mesh:\nVAO, texture, ..., from 3D model, \n shader-executable-code."];

// label = "Graphic SW Stack";

}](_images/graphviz-b6dd6ba2fc8a36ab296e2134b61d3186be2f4273.png)

Fig. 8 Graphic SW Stack and data flow in initializing graphic model¶

✅ As described in section Animation and shown in Fig. 8, the game engine’s built-in C++ renderer handles all OpenGL/Vulkan/Metal calls automatically. Users set values such as speed, velocity, …, and customize the animation logic.

After the user creates a skeleton and textures for each model and sets keyframe times using a 3D modeling tool, users can write gameplay scripts (Java code, C#, Blueprints, GDScript, Python, etc.) to tell the engine to play animations [6].

As described in section Node-Editor (shaders generator), skin materials are created primarily by graphics designers and technical artists, and secondarily by software programmers and graphics programmers, using the Node-Editor (shaders generator) tool. As a result, the shaders are generated by the Node-Editor tool.

Shaders may call built-in functions written in Compute Shaders, SPIR-V, or LLVM-IR. LLVM libclc is a project for OpenCL built-in functions, which can also be used in OpenGL [7]. Like CPU built-ins, new GPU ISAs or architectures must implement their own built-ins or port them from open source projects like libclc.

On the CPU side, the movement and other animations of the 3D model are performed by computing each frame from the stored keyframes, as illustrated in section Animation.
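Computing a frame from stored keyframes can be sketched as a simple interpolation. The snippet below is only an illustration under stated assumptions: the keyframe values, the single rotation angle per bone, and the name `sample_angle` are hypothetical, and plain linear interpolation stands in for whatever scheme a particular engine uses (rotations are commonly interpolated as quaternions instead).

```python
from bisect import bisect_right

# Hypothetical keyframes for one bone: (time in seconds, rotation angle in degrees).
KEYFRAMES = [(0.0, 0.0), (1.0, 90.0), (2.0, 45.0)]


def sample_angle(keyframes, t):
    """Linearly interpolate the bone angle at time t from time-sorted keyframes."""
    times = [time for time, _ in keyframes]
    if t <= times[0]:
        return keyframes[0][1]
    if t >= times[-1]:
        return keyframes[-1][1]
    # Index of the first keyframe whose time is strictly greater than t.
    hi = bisect_right(times, t)
    (t0, a0), (t1, a1) = keyframes[hi - 1], keyframes[hi]
    alpha = (t - t0) / (t1 - t0)  # 0.0 at the earlier keyframe, 1.0 at the later one
    return a0 + alpha * (a1 - a0)


# Sample the animation at 4 frames per second during the first second.
frame_angles = [sample_angle(KEYFRAMES, frame / 4) for frame in range(5)]
print(frame_angles)  # [0.0, 22.5, 45.0, 67.5, 90.0]
```

Only the sampled value for the current frame needs to reach the GPU; the keyframe table itself stays on the CPU side.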

![digraph G {

rankdir=LR;

compound=true;

node [shape=record];

subgraph cluster_cpu {

label = "CPU (Client)";

CPU_SW [label=" 3D Model | Game Engine | { OpenGL API | Shaders \n (builtin-functions)} | <f1> Driver"];

}

subgraph cluster_gpu {

label = "GPU HW (Server)";

subgraph cluster_gpu_sw {

label = "3D Rendering pipeline \ndescribed in the later section";

ModelData [label="3D Model Information\n(VAO, VBO, textures,\nindex buffers, materials)"];

UniformUpdates [label="Uniform Updates\n(bone matrices, morph weights,\nmaterial params, time, etc.\nsee Note below)", style=filled, fillcolor=lightgreen, color="black"];

}

}

CPU_SW:f1 -> UniformUpdates [label="2. Animation: \nDraw command and Uniform Updates\nfor each frame rendering"];

// label = "Graphic SW Stack";

}](_images/graphviz-f2e2504388613c7d30714a3ce016f85f01c6e9c2.png)

Fig. 9 Graphic SW Stack and data flow in rendering¶

The per-frame data is not the full set of vertices, but rather a small set of animation parameters, named Uniform Updates in Fig. 9, which are described later.

Note

Bone matrices determine the positions of triangles within a 3D model during animation. This bone transformation data is much smaller than the complete mesh of the 3D model. We will provide an example and explain this in more detail in the Animation Example section. Because this transformation data is small and constant across all shader pipeline stages, it is stored in the GPU’s global memory and can be cached in the uniform/constant cache for performance, as illustrated in Fig. 64 of the section Processor Units and Memory Hierarchy in NVIDIA GPU 15.

The CPU updates only these small animation parameters and issues draw commands to the GPU (the server side).

![digraph GPU_Pipeline {

rankdir=LR;

node [shape=box, style=rounded, fontsize=12];

UniformUpdates [label="Uniform Updates\n(bone matrices, morph weights,\nmaterial params, time, etc.)"];

ModelData [label="3D Model Information\n(VAO, VBO, textures,\nindex buffers, materials)"];

GPURendering [label="GPU Rendering\n(vertex shader, fragment shader,\nskinning, morphing, rasterization)"];

Framebuffer [label="Framebuffer\n(Video Memory Output)"];

UniformUpdates -> GPURendering;

ModelData -> GPURendering;

GPURendering -> Framebuffer [label="Rendered Image"];

}](_images/graphviz-37a321bdcd28451be5fec25bf6793c04f4e507b1.png)

Fig. 10 The input and output for GPU rendering¶

Next, the inputs and output of the 3D rendering pipeline are illustrated in Fig. 10.

The shape data of an object is stored in the form of VAOs (Vertex Array Objects) in OpenGL. This will be explained in a later section, OpenGL. Additionally, OpenGL provides VBOs (Vertex Buffer Objects), which allow vertex array data to be stored in high-performance graphics memory on the server-side GPU and enable efficient data transfer [8] [9].
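To make the split between buffer contents and layout more concrete, here is a minimal sketch that packs interleaved vertex data into raw bytes and reads one attribute back using a stride and an offset. It makes no OpenGL calls; the triangle data, the constant names, and `read_attribute` are hypothetical. In real OpenGL code the bytes would be uploaded with `glBufferData`, and the stride and offsets would be passed to `glVertexAttribPointer`, which the bound VAO records.

```python
import struct

# Hypothetical triangle: each vertex is position (x, y, z) followed by color (r, g, b).
VERTICES = [
    (-0.5, -0.5, 0.0, 1.0, 0.0, 0.0),
    (0.5, -0.5, 0.0, 0.0, 1.0, 0.0),
    (0.0, 0.5, 0.0, 0.0, 0.0, 1.0),
]

FLOAT_SIZE = struct.calcsize("<f")  # 4 bytes per 32-bit float
STRIDE = 6 * FLOAT_SIZE             # bytes from one vertex record to the next
POSITION_OFFSET = 0                 # position starts each vertex record
COLOR_OFFSET = 3 * FLOAT_SIZE       # color follows the three position floats

# This byte string plays the role of the data stored in a VBO.
vbo_bytes = b"".join(struct.pack("<6f", *vertex) for vertex in VERTICES)


def read_attribute(buffer, vertex_index, offset, count=3):
    """Read `count` floats of one attribute using the stride/offset layout."""
    start = vertex_index * STRIDE + offset
    return struct.unpack_from(f"<{count}f", buffer, start)


print(len(vbo_bytes), STRIDE, COLOR_OFFSET)         # 72 24 12
print(read_attribute(vbo_bytes, 1, COLOR_OFFSET))   # (0.0, 1.0, 0.0)
```

The buffer is uploaded once when the mesh is created; afterwards the GPU fetches attributes from it on every draw without further CPU transfers.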

After the GPU receives the Uniform Updates from the CPU, it performs the computationally intensive per-vertex work within the rendering pipeline to generate the final pixel values for each frame displayed on screen. These final pixel values are collectively referred to as the Rendered Image.

✅ “Rendered Image” = the final per‑frame output written into the framebuffer. The Uniform Updates will be described in detail later.

✅ The CPU updates only the small animation parameters named Uniform Updates in Fig. 9; the GPU computes the heavy per-vertex work.

As mentioned in the previous section on animation movement, 3D modeling tools store keyframes, the bone transforms at each keyframe, and related data, and perform animation based on this information.

The CPU updates only the bone transformation data …, rather than updating the entire vertex or mesh data for each animation frame. These updates are very small—on the order of kilobytes rather than megabytes. For each rendered frame, the CPU sends these small updates to the GPU, and the GPU takes over the animation work from the CPU. This type of movement animation is called skinning, and is illustrated as follows:

Skinning

Skinning is a vertex deformation technique used to animate a mesh by attaching its vertices to a hierarchical skeleton (bones). Each vertex stores one or more bone indices and corresponding weights that describe how strongly each bone influences that vertex.

During animation, the application updates the bone transformation matrices. The vertex shader then computes the final vertex position by blending the transformed positions according to the stored weights. This allows the mesh to bend, twist, and deform smoothly as the skeleton moves.

Skinning does not create new geometry or smooth the surface topology. It only transforms the existing vertices of the mesh. Examples include bending an arm, flapping a wing, or deforming a flexible tube as its bones rotate.

The CPU updates only high-level animation state, such as:

Current animation time

Bone matrices (small)

Morph weights

Material parameters

Particle emitter settings

Global uniforms (camera, lights, etc.)

These are tiny updates — kilobytes, not megabytes.
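The "kilobytes, not megabytes" comparison can be checked with a short back-of-the-envelope calculation. The bone count, vertex count, and per-vertex layout below are assumed example figures, not measurements from any particular model.

```python
FLOAT_BYTES = 4  # one 32-bit float

# Assumed example figures, not taken from any particular model.
BONE_COUNT = 64
VERTEX_COUNT = 100_000
FLOATS_PER_BONE_MATRIX = 16  # one 4x4 matrix per bone
FLOATS_PER_VERTEX = 8        # position (3) + normal (3) + texture coordinate (2)

uniform_bytes = BONE_COUNT * FLOATS_PER_BONE_MATRIX * FLOAT_BYTES
mesh_bytes = VERTEX_COUNT * FLOATS_PER_VERTEX * FLOAT_BYTES

print(f"bone matrices per frame: {uniform_bytes / 1024:.1f} KiB")         # 4.0 KiB
print(f"full vertex data:        {mesh_bytes / (1024 * 1024):.2f} MiB")   # 3.05 MiB
print(f"ratio: {mesh_bytes // uniform_bytes}x")                           # 781x
```

Under these assumptions, re-sending the whole mesh every frame would move several hundred times more data than sending the bone matrices alone.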

In practice (real engines), the weights are normalized so that they sum to 1.0: \(\sum_{i=0}^{N-1}\mathbf{weight}_i = 1.0\)

Example: Bending an Arm

Imagine a character’s arm mesh. Each vertex in the elbow area has weights like:

70% influenced by upper‑arm bone

30% influenced by lower‑arm bone

When the elbow bends:

Upper‑arm bone rotates

Lower‑arm bone rotates

GPU blends the influence

The elbow area deforms smoothly

This is skinning.
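The blending step in the elbow example can be sketched on the CPU as follows. This is a simplified illustration, not a vertex shader: it works in 2D, represents each bone transform as a rotation about a pivot instead of a 4x4 matrix, and all names (`rotate_about`, `skin_vertex`, `SHOULDER`, `ELBOW`) and coordinates are hypothetical.

```python
import math


def rotate_about(point, pivot, degrees):
    """Rotate a 2D point around a pivot; stands in for one bone's transform matrix."""
    angle = math.radians(degrees)
    dx, dy = point[0] - pivot[0], point[1] - pivot[1]
    return (
        pivot[0] + dx * math.cos(angle) - dy * math.sin(angle),
        pivot[1] + dx * math.sin(angle) + dy * math.cos(angle),
    )


def skin_vertex(rest_position, influences):
    """Blend the positions produced by each bone, weighted by its influence."""
    total = sum(weight for weight, _, _ in influences)
    x = y = 0.0
    for weight, pivot, degrees in influences:
        px, py = rotate_about(rest_position, pivot, degrees)
        x += (weight / total) * px  # dividing by total normalizes the weights
        y += (weight / total) * py
    return (x, y)


SHOULDER = (0.0, 0.0)
ELBOW = (1.0, 0.0)

# Per-frame data: the upper arm stays still, the lower arm bends 90 degrees.
# The vertex sits just past the elbow: 70% upper-arm bone, 30% lower-arm bone.
vertex = (1.2, 0.0)
skinned = skin_vertex(vertex, [(0.7, SHOULDER, 0.0), (0.3, ELBOW, 90.0)])
print(skinned)  # approximately (1.14, 0.06)
```

The vertex ends up between where the two bones alone would place it, which is what lets the elbow region bend gradually instead of creasing.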

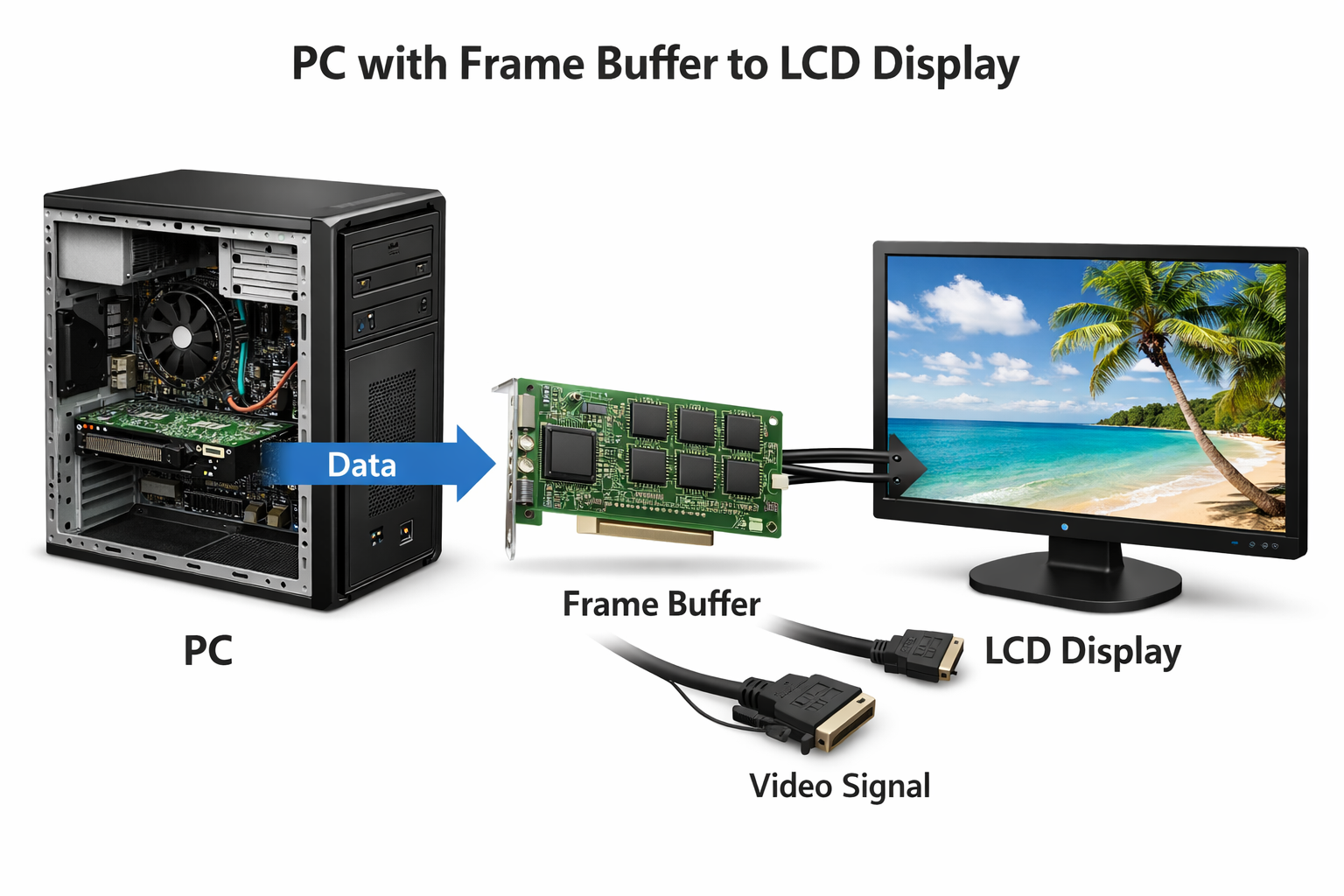

✅ After the GPU animation, the color pixels are written to the framebuffer (video memory). The display device (monitor, LCD, OLED, etc.) fetches these pixels and displays them on the screen. The interface between the framebuffer and the display device is explained in the next section, Pixels Displaying.

Pixels Displaying¶

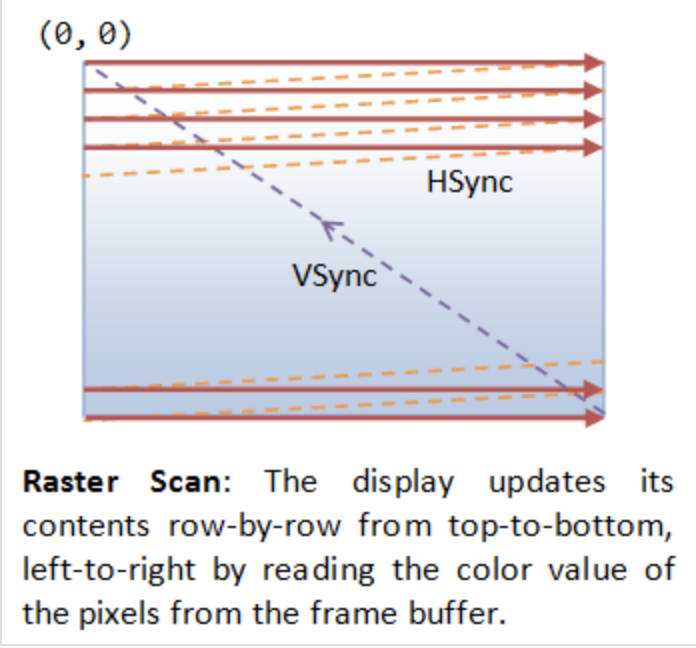

The interface between the frame buffer and the display device is shown in Fig. 11.

Fig. 11 PC with Frame Buffer to LCD Display¶

The GPU and the screen (monitor, LCD, OLED, etc.) use VSync, NVIDIA G-SYNC, or AMD FreeSync to prevent screen tearing, as described below:

Fig. 12 VSync¶

VSync

No tearing occurs when the GPU and display operate at the same refresh rate, since the GPU refreshes faster than the display, as shown below.

A B

GPU | ----| ----|

Display |-----|-----|

B A

Tearing occurs when the GPU has exact refresh cycles but VSync takes one more cycle than the display, as shown below.

A

GPU | -----|

Display |-----|-----|

B A

To avoid tearing, the GPU runs at half the refresh rate of the display, as shown below.

A B

GPU | -----| | -----|

Display |-----|-----|-----|-----|

B B A A

Double Buffering

While the display is reading from the frame buffer to display the current frame, we might be updating its contents for the next frame (not necessarily in raster-scan order). This would result in so-called tearing, in which the screen shows parts of the old frame and parts of the new frame. This can be resolved by using so-called double buffering. Instead of using a single frame buffer, a modern GPU uses two of them: a front buffer and a back buffer. The display reads from the front buffer, while we write the next frame to the back buffer. When we finish, we signal the GPU to swap the front and back buffers (known as a buffer swap or page flip).
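The front/back arrangement can be sketched with two plain lists. The class `DoubleBuffer` and its methods are hypothetical names for illustration; real frame buffers live in video memory and the swap is performed by the GPU and display hardware, not by application code like this.

```python
class DoubleBuffer:
    """Two frame buffers: the display reads `front`, the renderer writes `back`."""

    def __init__(self, pixel_count):
        self.front = [0] * pixel_count
        self.back = [0] * pixel_count

    def render(self, frame_value):
        # Drawing touches only the back buffer, so the display never sees a partial frame.
        for i in range(len(self.back)):
            self.back[i] = frame_value

    def swap(self):
        # Buffer swap (page flip): exchange the roles instead of copying pixels.
        self.front, self.back = self.back, self.front


buffers = DoubleBuffer(pixel_count=4)
buffers.render(frame_value=1)
visible_before_swap = list(buffers.front)  # still the old frame
buffers.swap()
visible_after_swap = list(buffers.front)   # the completed new frame
print(visible_before_swap, visible_after_swap)  # [0, 0, 0, 0] [1, 1, 1, 1]
```

Because the swap only exchanges references, its cost does not depend on the resolution of the frame.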

VSync

Double buffering alone does not solve the entire problem, as the buffer swap might occur at an inappropriate time, for example, while the display is in the middle of displaying the old frame. This is resolved via the so-called vertical synchronization (or VSync) at the end of the raster-scan. When we signal to the GPU to do a buffer swap, the GPU will wait till the next VSync to perform the actual swap, after the entire current frame is displayed.

As the text diagram above shows, the most important point is this: when VSync buffer swap is enabled, you cannot refresh the display faster than the display’s refresh rate. If the GPU is capable of producing a higher frame rate than the display’s refresh rate, then the GPU can run at the faster rate without tearing. If the GPU’s frame rate is the same as or lower than the display’s and your application refreshes at a fixed rate, the resulting refresh rate is likely to be an integral fraction of the display’s refresh rate, i.e., 1/2, 1/3, 1/4, etc. Otherwise, tearing will occur [10].
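The "integral fraction" behaviour follows from each buffer swap waiting for the next VSync. The sketch below is a simplified model: it assumes a constant render time per frame and plain double buffering (no triple buffering or adaptive sync), and the function name `vsync_frame_rate` is hypothetical.

```python
import math


def vsync_frame_rate(refresh_hz, render_seconds):
    """Effective frame rate when every buffer swap waits for the next VSync."""
    # Number of whole refresh intervals one frame occupies (at least one).
    # The small epsilon guards against floating-point rounding at exact multiples.
    intervals = max(1, math.ceil(render_seconds * refresh_hz - 1e-9))
    return refresh_hz / intervals


for render_ms in (10, 20, 40):
    fps = vsync_frame_rate(60, render_ms / 1000)
    print(f"{render_ms} ms per frame -> {fps:.0f} fps")
# 10 ms per frame -> 60 fps
# 20 ms per frame -> 30 fps
# 40 ms per frame -> 20 fps
```

On a 60 Hz display, a frame that takes 20 ms misses the first VSync and is shown at the second, so the rate drops to 1/2 of the refresh rate even though the GPU could deliver 50 frames per second without VSync.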

NVIDIA G-SYNC and AMD FreeSync

If both the monitor and the graphics card in your computer support NVIDIA G-SYNC, you’re in luck. With this technology, a special chip in the display communicates with the graphics card. This lets the monitor vary its refresh rate to match the frame rate of the NVIDIA GTX graphics card, up to the maximum refresh rate of the display. Frames are therefore displayed as soon as the GPU renders them, which eliminates screen tearing and reduces stutter whether the frame rate is higher or lower than the refresh rate of the display. This makes it well suited to situations where the frame rate varies, which happens a lot when gaming. Today, you can even find G-SYNC technology in gaming laptops!

AMD has a similar solution called FreeSync. However, it doesn’t require a proprietary chip in the monitor. In FreeSync, the AMD Radeon driver and the display firmware handle the communication. Generally, FreeSync monitors are less expensive than their G-SYNC counterparts, but gamers generally prefer G-SYNC over FreeSync, as the latter may cause ghosting, where old images leave behind artifacts [11].

The Role and Purpose of Shaders¶

The flow for 3D/2D graphics processing is shown in Fig. 13.

![digraph G {

rankdir=LR;

compound=true;

node [shape=record];

subgraph cluster_3d {

label = "3D/2D modeling software";

subgraph cluster_code {

label = "3D/2D's code: engine, shader, ...";

Api [label="<a> OpenGL API | <s> Shaders (3D animation's shaders \n or programmer-written shaders)"];

}

}

subgraph cluster_driver {

label = "Driver"

Compiler [label="On-line Compiler"];

Obj [label="obj"];

Linker [label="On-line binding (Linker)"];

Exe [label="exe"];

}

Api:a -> Obj [lhead ="cluster_driver"];

Api:s -> Compiler;

Compiler -> Obj -> Linker -> Exe;

Exe -> GPU;

Exe -> CPU [ltail ="cluster_driver"];

// label = "OpenGL Flow";

}](_images/graphviz-cbf072161c9690c7ffe5ebb3818fbce8375eb85c.png)

Fig. 13 OpenGL Flow¶

The compiled shaders are sent to the GPU when you call glLinkProgram(). That is the moment the driver turns the compiled shaders into GPU-executable form and uploads them.

glLinkProgram() is called when you finish preparing a shader program, not when creating a mesh and not when issuing a draw command.

When a game does call glLinkProgram() to re-link a shader program, the shaders must be compiled and loaded onto the GPU.

This usually happens at game startup, at level load, or when creating a new shader variant (e.g., enabling shadows, fog, skinning).

Games switch shaders constantly — sometimes hundreds of times per frame — but they do not re‑link them.

While a video game is running, different materials, effects, and rendering passes use different shaders.

Examples of switching shaders:

When the player enters a snowy biome, ice meshes use the ice shader.

The axe blade uses a metal PBR shader. When the axe blade hits stone and sparks fly, rendering switches to a particle shader.
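The difference between linking once and switching many times can be sketched with a small cache. `ShaderCache` is a hypothetical engine helper that makes no real OpenGL calls; the comments mark where an actual engine would call `glLinkProgram()` and `glUseProgram()`. Linking lazily on first use is only a simplification here, since games typically link at startup or level load as described above.

```python
class ShaderCache:
    """Hypothetical engine helper: link each shader program once, then only switch."""

    def __init__(self):
        self.linked = {}       # shader name -> stand-in for a linked program handle
        self.link_count = 0
        self.switch_count = 0
        self.current = None

    def use(self, name):
        if name not in self.linked:
            # Expensive path: where a real engine would compile and call glLinkProgram().
            self.link_count += 1
            self.linked[name] = self.link_count
        if self.current != name:
            # Cheap path: where a real engine would call glUseProgram().
            self.switch_count += 1
            self.current = name
        return self.linked[name]


cache = ShaderCache()
# Three frames, each drawing ice meshes, the axe blade, and spark particles.
for _ in range(3):
    for material in ("ice", "metal_pbr", "particle"):
        cache.use(material)

print(cache.link_count, cache.switch_count)  # 3 9
```

The link count stays at the number of distinct shader programs, while the switch count grows with every frame.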